Comparison of Web Frameworks (Spring Boot Vs Vert.x)

Multi-threaded Model of Spring Boot

This model is familiar to most developers. The application creates a pool of threads and hands each of them a task and the data to process. Tasks run in parallel. If the threads share no data, there is no synchronization overhead, which keeps the work fast.

After the work is completed, the thread is not killed but returns to the pool and waits for the next task. This removes the overhead of creating and destroying threads. A web server running Spring Boot works this way: each request is handled in its own thread, and one thread processes one request at a time.
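The pooling behaviour described above can be sketched with the JDK's own `ExecutorService`. This is a minimal illustration, not Spring Boot's actual server code; the class name `ThreadPoolSketch` and the method `handle` are hypothetical, and each "request" is just a trivial task that records which pooled thread ran it.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class; it only illustrates thread reuse in a pool.
class ThreadPoolSketch {

    // Runs `requests` trivial tasks on a pool of `poolSize` threads and
    // returns the names of the worker threads that handled them.
    static Set<String> handle(int poolSize, int requests) {
        ExecutorService pool = Executors.newFixedThreadPool(poolSize);
        Set<String> workers = ConcurrentHashMap.newKeySet();
        for (int i = 0; i < requests; i++) {
            // Each "request" runs from start to finish on one pooled thread.
            pool.execute(() -> workers.add(Thread.currentThread().getName()));
        }
        pool.shutdown(); // accept no new tasks, let the queued ones finish
        try {
            pool.awaitTermination(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return workers;
    }

    public static void main(String[] args) {
        Set<String> workers = handle(4, 100);
        System.out.println(workers.size() + " pooled threads served 100 requests");
    }
}
```

The number of distinct worker names never exceeds the pool size, however many requests are submitted: threads are reused rather than created per request.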

We have a fairly large number of threads: slow requests hold a thread for a long time, while fast requests are processed almost instantly and free their thread for other work. This prevents slow requests from taking all the CPU time and forcing fast requests to hang. But such a system has certain limitations. A situation may arise when a large number of slow requests arrive at once, for example requests that work with a database or the file system. Such requests will occupy all the threads, which makes it impossible to execute other requests. Even a request that needs only 1ms to execute will not be processed on time. This can be mitigated by increasing the number of threads so that they can absorb a sufficiently large number of slow requests. Unfortunately, threads are scheduled by the OS, and that scheduling itself consumes CPU time.

Therefore, the more threads we create, the greater the overhead of managing them and the less processor time each thread receives. The situation is exacerbated by the blocking style typical of Spring applications: a thread blocked on a database, file system, or other input-output operation stays occupied without performing any useful work. The more threads there are, the higher these costs. As a result, we get quite a lot of idle time, and its amount depends on the duration of the database and file system calls. The best way to keep the processor fully loaded with useful work is to move to an architecture that uses non-blocking operations.
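The starvation scenario described above can be reproduced in a few lines. The sketch below is an assumption-laden illustration rather than real server code: the class `StarvationSketch` is hypothetical, and the slow database or file system call is simulated by waiting on a `CountDownLatch`.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class: slow blocking requests starve a fast one.
class StarvationSketch {

    // Returns true if the fast request was still waiting while every pool
    // thread was blocked by a slow request.
    static boolean fastRequestStarved(int poolSize) {
        ExecutorService pool = Executors.newFixedThreadPool(poolSize);
        CountDownLatch slowIoFinished = new CountDownLatch(1);
        try {
            // Occupy every thread with a "slow request" blocked on simulated I/O.
            for (int i = 0; i < poolSize; i++) {
                pool.execute(() -> {
                    try {
                        slowIoFinished.await();
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    }
                });
            }
            // The fast request needs almost no CPU, yet it must sit in the queue.
            Future<String> fast = pool.submit(() -> "done");
            boolean starved = !fast.isDone();

            slowIoFinished.countDown();     // the simulated I/O completes
            fast.get(5, TimeUnit.SECONDS);  // only now can the fast request run
            return starved;
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            slowIoFinished.countDown();
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println("fast request starved: " + fastRequestStarved(2));
    }
}
```

Raising `poolSize` only postpones the problem: a larger burst of slow requests fills the bigger pool in the same way.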

Asynchronous Model of Vert.x

This model is less common than the multi-threaded one, but it is no less capable. The asynchronous model is built on an event queue (event loop). When an event occurs (a request arrives, a file is read, a response comes back from the database), it is placed at the end of the queue. The thread that processes this queue takes the event from the head of the queue and executes the code associated with it. As long as the queue is not empty, the processor stays busy.

Vert.x works according to this scheme. An event-loop thread processes the event queue (Vert.x can run several event loops, so there may be more than one such thread). Almost all operations are non-blocking. Blocking operations are also available, but their use on the event loop is strongly discouraged. You will see why shortly.
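The event queue described above can be sketched in plain Java. This is a deliberately simplified illustration and not how Vert.x is implemented: the class `EventLoopSketch` is hypothetical, the loop exits when the queue is empty instead of waiting for new events, and the "database response" is modelled as a follow-up event that a handler places at the end of the queue.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.List;
import java.util.Queue;

// Hypothetical single-threaded event loop: handlers are taken from the head
// of the queue and may enqueue follow-up events at its tail.
class EventLoopSketch {
    private final Queue<Runnable> events = new ArrayDeque<>();

    void enqueue(Runnable handler) {
        events.add(handler);
    }

    // Drains the queue; a real event loop would wait for new events instead.
    void run() {
        Runnable next;
        while ((next = events.poll()) != null) {
            next.run();
        }
    }

    static List<String> demo() {
        List<String> log = new ArrayList<>();
        EventLoopSketch loop = new EventLoopSketch();
        loop.enqueue(() -> {
            log.add("request A received");
            // The database answer arrives later as a separate event.
            loop.enqueue(() -> log.add("response A sent"));
        });
        loop.enqueue(() -> log.add("request B received"));
        loop.run();
        return log;
    }

    public static void main(String[] args) {
        demo().forEach(System.out::println);
    }
}
```

Request B is picked up before the response for A is sent, because the loop never waits inside a handler. It also hints at why blocking calls are discouraged: a handler that blocks stalls every event queued behind it.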

Let's take an example request that costs 1 + 2 + 1ms: 1ms of processing, 2ms of waiting for the database, and 1ms of rendering. An event associated with the arrival of the request is taken from the event queue. We process the request and spend 1ms. Next, an asynchronous non-blocking database request is made and control is returned immediately. We can take the next event from the queue and execute it. For example, we take another request, process it, send its request to the database, return control, and do the same one more time. And now the database response to the very first request arrives.

The event associated with it is placed in the queue. If the queue is empty, it is executed immediately: the data is rendered and returned to the client. If there is something in the queue, it has to wait until the other events are processed. Usually, a single request takes about as long as it would in a multi-threaded system with blocking operations. In the worst case, waiting for other events takes time and the request is processed more slowly.

On the other hand, while the system with blocking operations simply waited 2ms for the response, the system with non-blocking operations managed to execute two more parts of two other requests. Each request may be a little slower overall, but we can process many more requests per unit of time, so overall throughput is higher. The processor is always busy with useful work. At the same time, far less time is spent on processing the queue and moving from event to event than on switching between threads in a multi-threaded system.
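The arithmetic behind this comparison can be worked through for a single thread. The sketch below rests on simplifying assumptions that are not in the original measurements: all requests are already queued at time 0, each costs 1ms of processing, 2ms of database wait, and 1ms of rendering, and context-switch and queue overheads are ignored. The class `TimelineSketch` and its methods are hypothetical.

```java
// Hypothetical model of one thread serving requests that each need
// 1ms of processing, 2ms of database wait, and 1ms of rendering.
class TimelineSketch {
    static final int PROCESS_MS = 1;
    static final int DB_WAIT_MS = 2;
    static final int RENDER_MS = 1;

    // Blocking calls: the thread is held for the whole request,
    // including the time spent waiting for the database.
    static int blockingTotalMs(int requests) {
        return requests * (PROCESS_MS + DB_WAIT_MS + RENDER_MS);
    }

    // Non-blocking calls: only CPU work occupies the event loop,
    // and database waits overlap with work on other requests.
    static int eventLoopTotalMs(int requests) {
        int clock = 0;
        int[] dbReadyAt = new int[requests];
        for (int i = 0; i < requests; i++) {
            clock += PROCESS_MS;                // first part of request i
            dbReadyAt[i] = clock + DB_WAIT_MS;  // its response event arrives later
        }
        for (int i = 0; i < requests; i++) {
            // Render as soon as the loop is free and the response has arrived.
            clock = Math.max(clock, dbReadyAt[i]) + RENDER_MS;
        }
        return clock;
    }

    public static void main(String[] args) {
        System.out.println("blocking:   " + blockingTotalMs(3) + " ms");
        System.out.println("event loop: " + eventLoopTotalMs(3) + " ms");
    }
}
```

For three requests this model gives 12ms on one blocking thread and 6ms on one event-loop thread, while a single request takes 4ms either way. A multi-threaded server closes the gap by adding threads, at the cost of the scheduling overhead discussed earlier.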

Therefore, asynchronous systems with non-blocking operations need no more threads than there are cores in the system. A multi-threaded system with blocking operations has a lot of idle time. An excessive number of threads creates a lot of overhead, while an insufficient number slows the system down when many slow requests arrive. An asynchronous non-blocking application uses CPU time more efficiently but is more difficult to design.

Memory leaks are a particular concern: a Vert.x process can run for a very long time, and if the programmer does not take care to release the data after processing each request, we get a leak that will eventually force a server restart. There is also an asynchronous architecture with blocking operations, but it is much less efficient, as some of the examples further on show.
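A leak of this kind can be illustrated without any framework. The sketch below is hypothetical and is not taken from Vert.x: `RequestStateSketch` keeps per-request data in a long-lived map, and the `cleanUp` flag stands in for the programmer remembering to release that data once the response is ready.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical long-lived handler that keeps per-request state in a map.
class RequestStateSketch {
    private final Map<Integer, String> inFlight = new HashMap<>();

    String handle(int requestId, String payload, boolean cleanUp) {
        inFlight.put(requestId, payload);          // state kept while processing
        String response = "echo:" + inFlight.get(requestId);
        if (cleanUp) {
            inFlight.remove(requestId);            // release it once we are done
        }
        return response;
    }

    // Number of entries still held after the requests have completed.
    int retained() {
        return inFlight.size();
    }

    public static void main(String[] args) {
        RequestStateSketch leaky = new RequestStateSketch();
        RequestStateSketch tidy = new RequestStateSketch();
        for (int id = 0; id < 1000; id++) {
            leaky.handle(id, "payload", false);
            tidy.handle(id, "payload", true);
        }
        System.out.println("leaky handler retains " + leaky.retained() + " entries");
        System.out.println("tidy handler retains " + tidy.retained() + " entries");
    }
}
```

In a process that is restarted per request such a map would not matter; in a server that runs for weeks, every forgotten entry stays in memory until the restart.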

Let us highlight the features that need to be considered when developing asynchronous applications and look at some common mistakes people make when dealing with the asynchronous architecture.

| Spring Boot | Vert.x |
| --- | --- |
| Managed by Pivotal | Under the Eclipse Foundation now (VMware initially) |
| JVM languages only | Polyglot (you can use various languages in your services) |
| Opinionated and heavyweight | Very raw and lightweight |
| Bigger ecosystem and huge support | Testing asynchronous code can sometimes be tedious |
| Not reactive by default | Reactive |
| Not modular | Follows a module system |
| | Simple concurrency model |

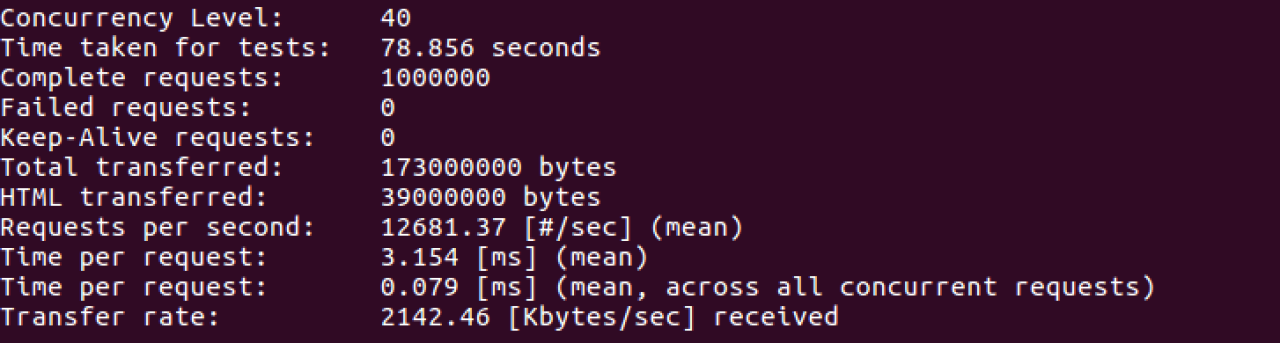

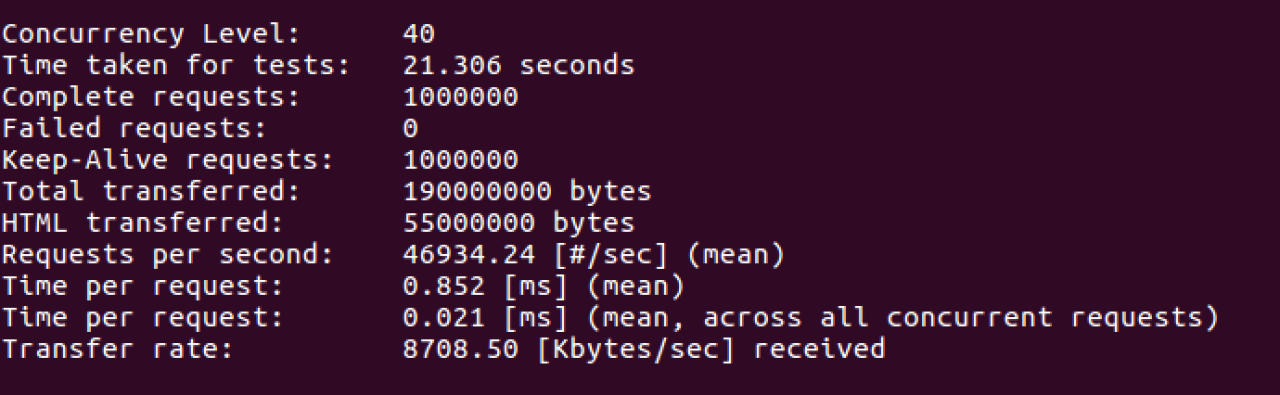

So far we have discussed the most important features of the frameworks, but how will our applications behave in production? For our performance test, we decided to use the ApacheBench (ab) tool to benchmark the Spring Boot and Vert.x HTTP servers.

Let's start with Spring Boot. We create an application with a single REST API.

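Its configuration:

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.EnableAutoConfiguration;
import org.springframework.context.annotation.ComponentScan;
import org.springframework.context.annotation.Configuration;

// Application entry point: enables component scanning and auto-configuration.
@Configuration
@ComponentScan
@EnableAutoConfiguration
public class Application {
    public static void main(String[] args) {
        SpringApplication.run(Application.class, args);
    }
}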

A simple POJO class:

public class Widget {
    private String type;
    private int length;
    private int height;

    public Widget(String type, int length, int height) {
        this.type = type;
        this.length = length;
        this.height = height;
    }

    public String getType() {
        return type;
    }

    public int getLength() {
        return length;
    }

    public int getHeight() {
        return height;
    }
}

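The REST controller:

import org.springframework.http.MediaType;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RequestMethod;
import org.springframework.web.bind.annotation.RestController;

@RequestMapping("/api/**")
@RestController
public class WidgetController {

    // Handles GET requests under /api and returns a fixed widget as JSON.
    @RequestMapping(method = RequestMethod.GET, produces = {MediaType.APPLICATION_JSON_VALUE})
    public Widget index() {
        return new Widget("green", 10, 7);
    }
}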

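In Vert.x, the server looks like this:

import io.vertx.core.AbstractVerticle;
import io.vertx.core.http.HttpMethod;
import io.vertx.core.http.HttpServer;
import io.vertx.core.http.HttpServerRequest;
import io.vertx.core.json.Json;
import io.vertx.ext.web.Router;

public class Server extends AbstractVerticle {

    @Override
    public void start() {
        HttpServer server = vertx.createHttpServer();
        Router router = Router.router(vertx);

        router.route(HttpMethod.POST, "/api").handler(routingContext -> {
            HttpServerRequest req = routingContext.request();
            // Read the whole body, decode it into a Widget, and echo it back as JSON.
            req.bodyHandler(buffer -> {
                Widget widget = Json.decodeValue(buffer.toString(), Widget.class);
                routingContext.response().end(Json.encode(widget));
            });
        });

        // router::accept is the Vert.x 3 form; newer versions take the router itself as the handler.
        server.requestHandler(router::accept).listen(8080);
    }
}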

And the POJO class will be similar.

Start the services one by one and run the ApacheBench (ab) test against each of them; the tool is available from the official Apache site. The performance test simulates:

40 concurrent users

1 000 000 requests

Here are the results of our tests:

Spring Boot

Vert.x

The load tests were performed on a virtual machine with the following configuration:

OS: Ubuntu 16.04 LTS

CPU: Intel(R) Quad Core(TM) i5 @ 3.40GHz

Memory: 16GB DDR4
